Less than a year after creating a centralized "superalignment" safety group to steer the company away from possible negative impacts of artificial intelligence, OpenAI has disbanded the team, and two senior staff members who had been leading the effort both resigned last week.

The organization has been redistributing safety-related work across the company, Axios reported.

Co-founder Ilya Sutskever and senior researcher Jan Leike were appointed as co-leads of OpenAI's superalignment team when it was announced in July.

At that time, OpenAI said it would commit 20% of its compute to superalignment efforts, but those resources never materialized and the team's work was stymied, according to a recent TechCrunch report.

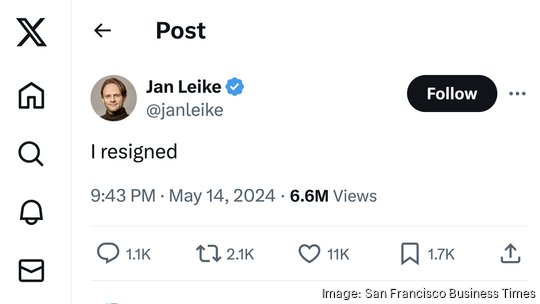

On Tuesday, Sutskever announced his resignation from OpenAI. Hours later, Leike was also out, announcing his decision on X with two simple words: "I resigned."

It was a stunning, but perhaps not entirely unexpected, turn of events for an organization that has publicly championed safety as part of its efforts to develop artificial general intelligence, or AGI.

Sutskever's resignation came six months after he voted alongside three other board members to fire CEO Sam Altman, an ultimately failed coup that solidified Altman's power with a new, handpicked board that no longer included Sutskever.

Although Sutskever lost his board seat in November, he remained at OpenAI, though perhaps only on paper. He hadn't returned to work since Altman's swift reinstatement, according to the New York Times.

"After almost a decade, I have made the decision to leave OpenAI. The company’s trajectory has been nothing short of miraculous, and I’m confident that OpenAI will build AGI that is both safe and beneficial under the leadership of (Sam Altman), (Greg Brockman), (Mira Murati) and now, under the excellent research leadership of (Jakub Pachocki)," Sutskever wrote on X.

Pachocki was appointed as chief scientist upon Sutskever's resignation.

Leike was more frank about his concerns in a series of posts last week.

"I have been disagreeing with OpenAI leadership about the company's core priorities for quite some time, until we finally reached a breaking point," Leike wrote. "Over the past few months my team has been sailing against the wind. Sometimes we were struggling for compute and it was getting harder and harder to get this crucial research done."

Leike also acknowledged the tension between OpenAI's product and safety teams.

"Safety culture and processes have taken a backseat to shiny products," Leike wrote.

Altman responded publicly to both Sutskever and Leike with appreciative posts, expressing sadness at their departures.

"Ilya and OpenAI are going to part ways. This is very sad to me; Ilya is easily one of the greatest minds of our generation ... OpenAI would not be what it is without him," Altman wrote.

He responded to Leike's announcement with an orange heart emoji and an acknowledgment about the need for further work on safety.

"i'm super appreciative of Jan Leike's contributions to OpenAI's alignment research and safety culture, and very sad to see him leave. he's right we have a lot more to do; we are committed to doing it. i'll have a longer post in the next couple of days," Altman wrote.

OpenAI's president, Greg Brockman, took to social media this weekend with a longer response about the organization's safety strategy.

"The future is going to be harder than the past. We need to keep elevating our safety work to match the stakes of each new model. We adopted our Preparedness Framework last year to help systematize how we do this," Brockman wrote.

"As we build ... we're not sure yet when we’ll reach our safety bar for releases, and it’s ok if that pushes out release timelines," Brockman continued.

The day before Sutskever and Leike resigned, OpenAI held a live demonstration of an updated version of ChatGPT, built on what the organization called its newest model, GPT-4o. The "o" stands for "omni," a nod to the company's ambitions beyond textual chat.

The update added voice capabilities to its flagship product, and the live demo featured a voice profile called "Sky," which netizens quickly associated with the dystopian 2013 AI film "Her," in which actress Scarlett Johansson voiced an AI assistant.

Six days after the demo, OpenAI explained in a blog post that it paid several actors, not Johansson, to read scripts and record their voices for the chatbot's use, resulting in five initial voice profiles for use in the app: Breeze, Cove, Ember, Juniper and Sky.

In February, OpenAI also revealed a new video generation tool called Sora, which it said could produce high-quality videos up to one minute long. For now, the tool is only available to a small group of researchers and artists to "assess critical areas for harms or risks" and "gain feedback," OpenAI said at the time.

Sora apparently still requires some human work in post-production, according to a blog called FX Guide.

FX Guide posted a behind-the-scenes look at how a short video called "Air Head" was created by Shy Kids, a film production studio that got early access to Sora.

"For high-end projects, it may be a while before it allows the level of specificity that a director requires. It will be more than ‘close enough’ for many others while delivering stunning imagery," FX Guide wrote. "Air Head still needed a large amount of editorial and human direction to produce this engaging and funny story film."